How to Navigate the World of Data Analytics: Concepts, Data Migration, and Cross-Usage

In today's technology-driven business world, data analytics is critically important: it enables organisations to make informed decisions and thereby improve their efficiency and competitiveness.

In this article, we'll introduce you to important concepts and processes related to data migration to help you better navigate the exciting world of data analytics.

Key Concepts Explained

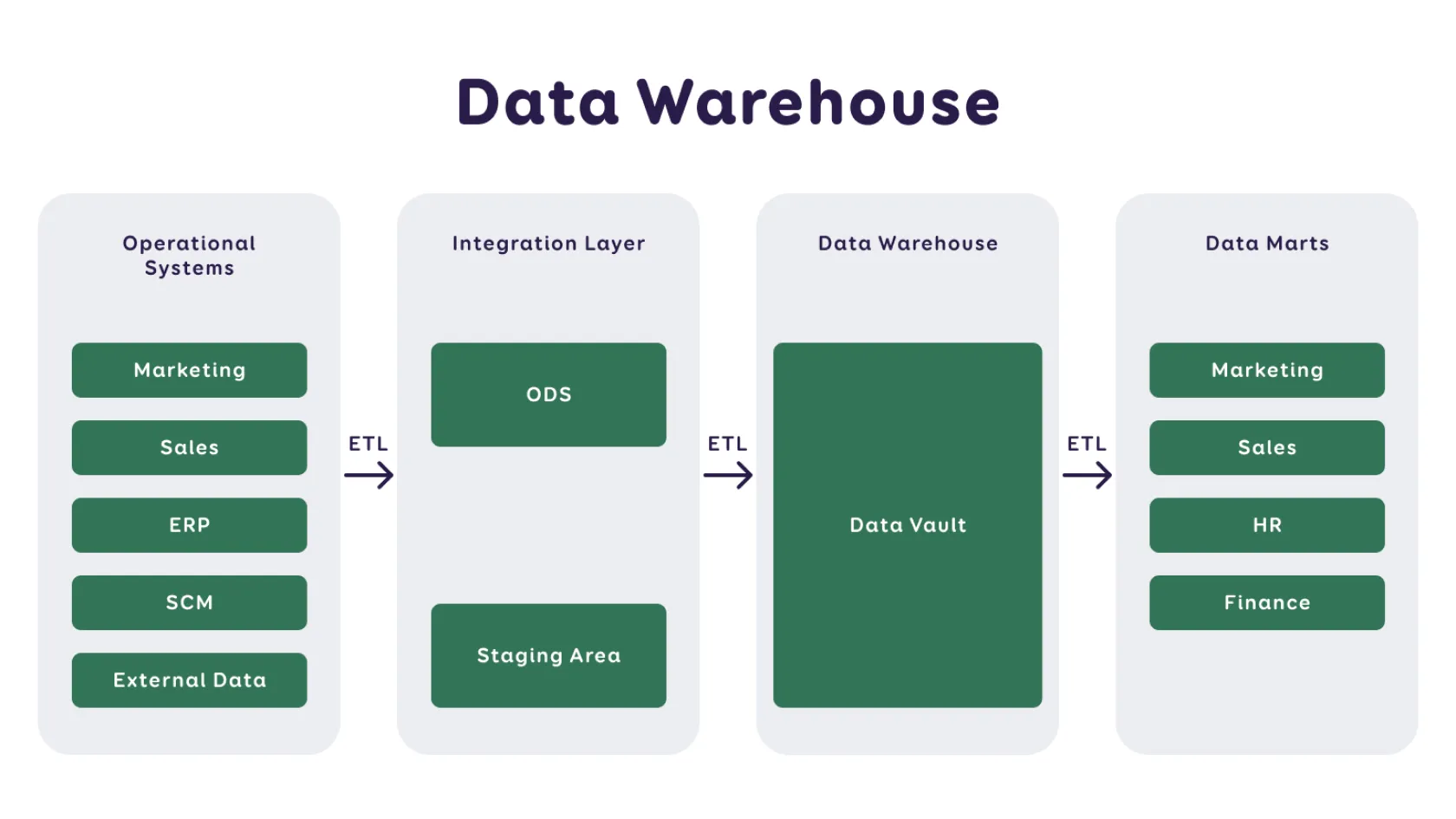

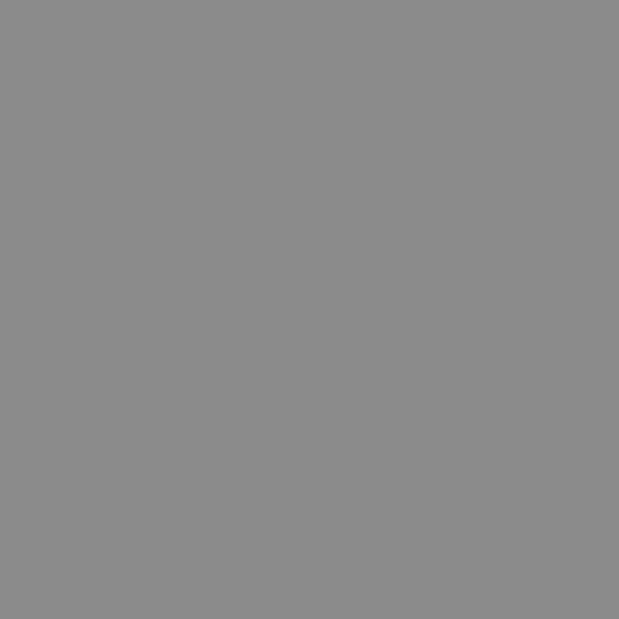

- Data Warehouse (DWH) – A collection of structured data gathered for a specific purpose and stored in one or more databases. It serves as the central repository of an organisation's data.

- Data Lake – A collection of unprocessed (raw) data, often used as a primary source for machine learning. It may also hold an entire organisation's data but, unlike a data warehouse, it is not necessarily organised for a specific purpose yet.

- Big Data – Large volumes of data in a variety of formats, much of it unstructured, such as web statistics, social media content, sensor readings, text documents, audio and video. Whereas a data warehouse is primarily an architectural concept, big data refers mainly to the technology used to store and process such data.

- Data Mart – A smaller version of a data warehouse, created for a specific business area, allowing for more specific analysis.
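To make the relationship between a data warehouse and a data mart more concrete, here is a minimal Python sketch that uses the standard-library `sqlite3` module as a stand-in for a warehouse database. The table `sales`, the view `retail_mart`, the business areas and all rows are hypothetical; in practice a data mart might just as well be a separate table or schema rather than a view.

```python
import sqlite3

# Hypothetical warehouse: one central table covering every business area.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales ("
    "sale_id INTEGER PRIMARY KEY, business_area TEXT, product TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO sales (business_area, product, amount) VALUES (?, ?, ?)",
    [
        ("retail", "Chair", 120.0),
        ("retail", "Table", 300.0),
        ("wholesale", "Chair", 1000.0),
        ("wholesale", "Desk", 2500.0),
    ],
)

# Hypothetical data mart, modelled here as a view: only the rows and
# columns the retail business area needs for its own analysis.
conn.execute(
    "CREATE VIEW retail_mart AS "
    "SELECT product, amount FROM sales WHERE business_area = 'retail'"
)


def mart_total(connection: sqlite3.Connection) -> float:
    """Return total sales visible through the retail data mart."""
    (total,) = connection.execute("SELECT SUM(amount) FROM retail_mart").fetchone()
    return total


warehouse_rows = conn.execute("SELECT COUNT(*) FROM sales").fetchone()[0]
mart_rows = conn.execute("SELECT COUNT(*) FROM retail_mart").fetchone()[0]
print(warehouse_rows, mart_rows, mart_total(conn))  # 4 2 420.0
```

Because the mart is only a filtered slice of the warehouse, the retail team works with a smaller, purpose-built dataset while the warehouse remains the single central source.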

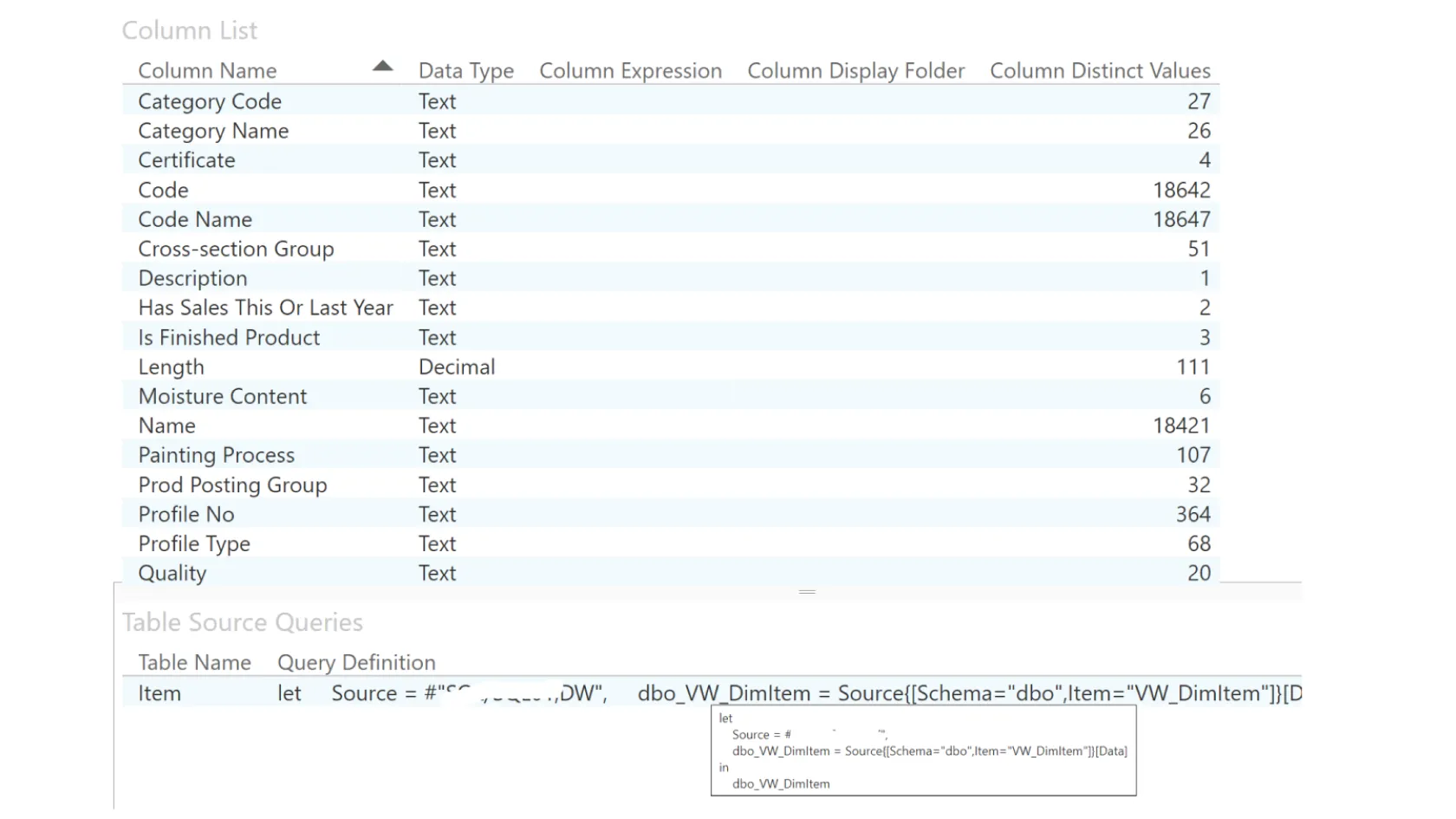

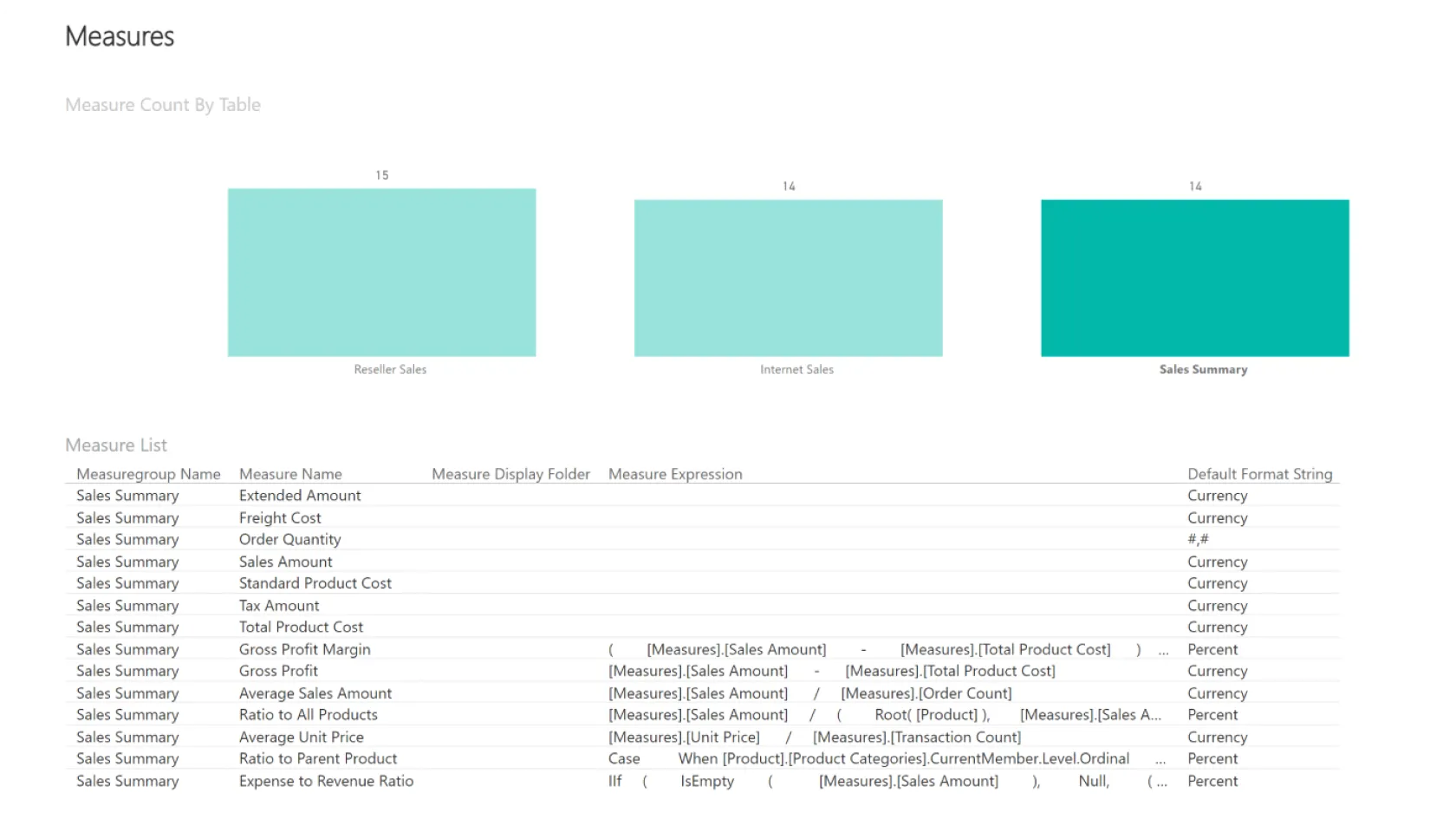

- Dataset (Semantic Model) – A data layer that describes data in business terms. It sits between the data warehouse and the reports and is built for specific reporting needs (e.g., profit reports).

- Data Flow – The process of moving data from one location to another, for example from a source system into the data warehouse. Data flows are essential for data integration and preservation.

- Masterdata – A centrally maintained data collection used when the required information is not available in the source information system. For example, if cost information for a product is not available in an application, a specialist can enter it manually on a prepared form (Excel, an app, etc.) and integrate it into the data warehouse.

In addition to managing individual data items, the term masterdata is also used when the same data must be linked across multiple original sources, such as customer registers kept by a company's branches in different countries.

- ELT (Extract, Load, Transform) Methodology – The process begins by extracting data from the original source and loading the necessary data, still unprocessed, into the data warehouse. In the transformation phase, the data is enriched and errors are corrected to ensure its accuracy and reliability.

After ELT, the data is linked into an optimised semantic model and presented to users in an understandable form. ELT differs from the older ETL (Extract, Transform, Load) methodology in that transformations are performed after the data has been loaded into the warehouse, rather than before.
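The ELT steps, including the use of masterdata to fill a gap in the source system, can be sketched in Python with `sqlite3` standing in for the warehouse. Everything here is a hypothetical illustration: the table names `raw_orders`, `md_product_cost` and `orders`, the product IDs and the comma-decimal error are assumptions rather than part of any particular system.

```python
import sqlite3

# Hypothetical rows extracted from a source system. There is no cost column,
# and one price uses a comma as the decimal separator (an error to fix later).
source_orders = [
    ("2024-01-05", "P1", "3", "19.90"),
    ("2024-01-06", "P2", "1", "5,50"),
    ("2024-01-07", "P1", "2", "19.90"),
]

# Hypothetical masterdata: unit costs entered manually by a specialist,
# e.g. on a prepared Excel form, because the source system lacks them.
product_costs = [("P1", 12.0), ("P2", 3.0)]

conn = sqlite3.connect(":memory:")

# Extract + Load: the raw data lands in the warehouse as-is (all text, no cleaning).
conn.execute(
    "CREATE TABLE raw_orders (order_date TEXT, product_id TEXT, qty TEXT, unit_price TEXT)"
)
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", source_orders)
conn.execute("CREATE TABLE md_product_cost (product_id TEXT PRIMARY KEY, unit_cost REAL)")
conn.executemany("INSERT INTO md_product_cost VALUES (?, ?)", product_costs)

# Transform: runs inside the warehouse after loading. It fixes the decimal
# separator, casts types and enriches each order with the masterdata cost.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT r.order_date,
           r.product_id,
           CAST(r.qty AS INTEGER) AS qty,
           CAST(REPLACE(r.unit_price, ',', '.') AS REAL) AS unit_price,
           m.unit_cost
    FROM raw_orders AS r
    LEFT JOIN md_product_cost AS m ON m.product_id = r.product_id
    """
)

profit = conn.execute(
    "SELECT ROUND(SUM(qty * (unit_price - unit_cost)), 2) FROM orders"
).fetchone()[0]
print(profit)  # 42.0
```

Because the raw table is left untouched, the transformation can be corrected and rerun inside the warehouse without extracting from the source again, which is the practical benefit of transforming after loading.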

The Journey of Data Analytics: From a Large Amount of Data to a Report

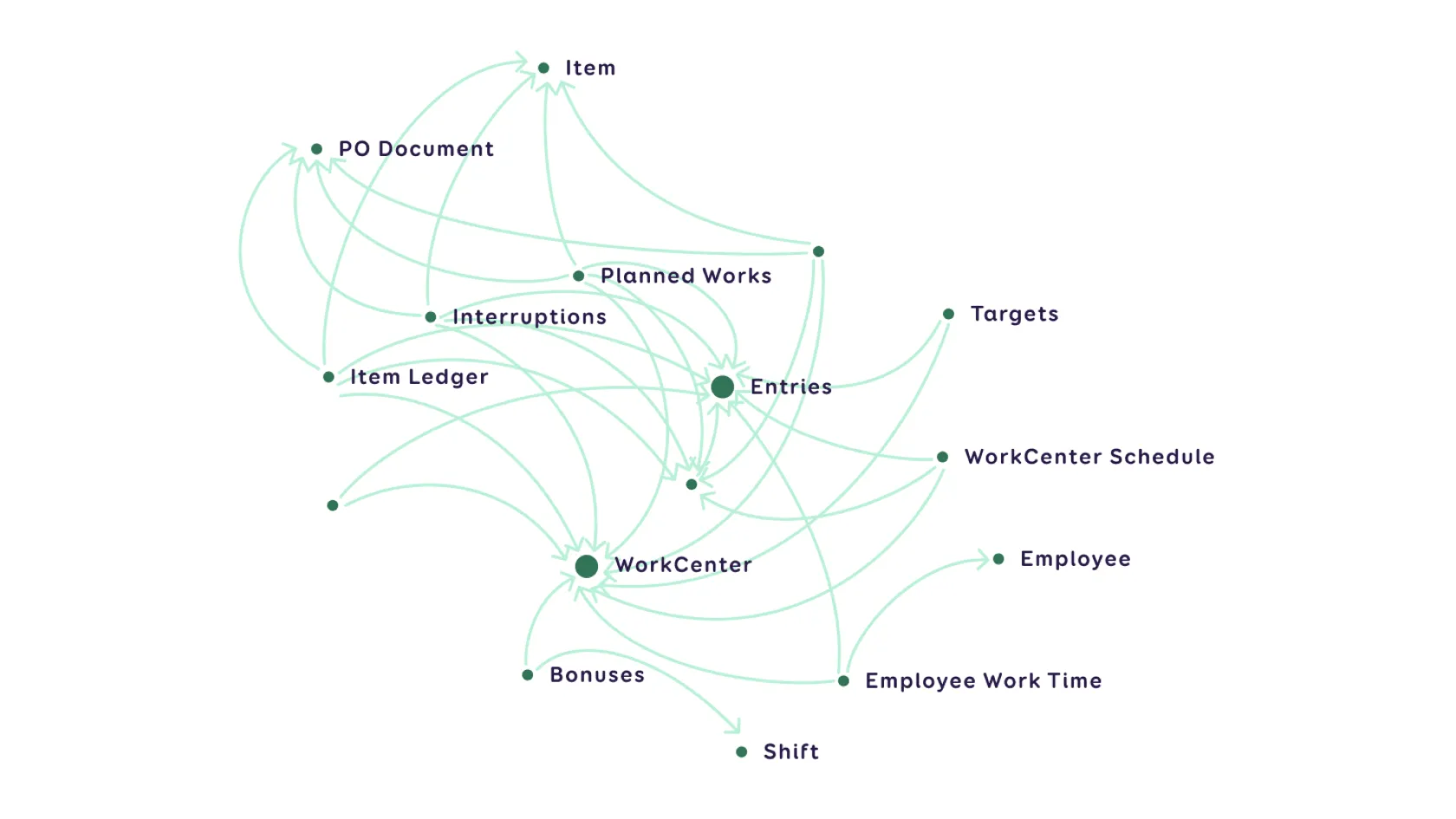

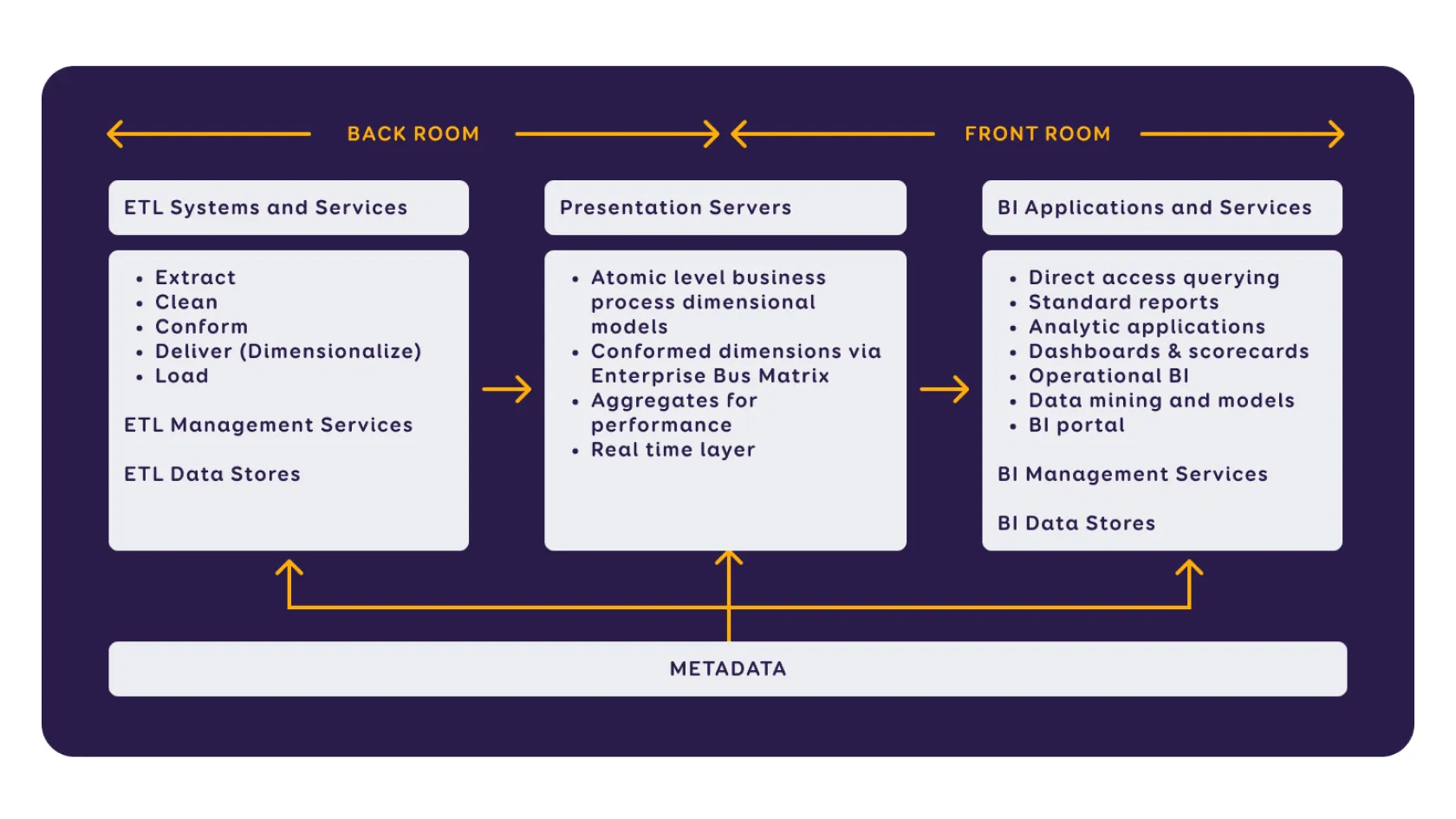

The Kimball methodology model illustrates the connections between the back end and the front end, giving a visual overview of how raw data is turned into reports and decision support. The process is divided into three stages:

- data preparation (ELT),

- the business model layer,

- presenting results with analytical capabilities.
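The three stages can be illustrated with a toy Python pipeline. This is a sketch under stated assumptions, not the Kimball methodology itself or Intelex Insight's implementation: `fact_sales`, the `Measure` class, `SEMANTIC_MODEL`, `query` and `render_report` are hypothetical names, and SQLite stands in for both the warehouse and the semantic layer.

```python
import sqlite3
from dataclasses import dataclass

# Stage 1 (data preparation): assume ELT has already produced this cleaned table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE fact_sales (region TEXT, revenue REAL, cost REAL)")
conn.executemany(
    "INSERT INTO fact_sales VALUES (?, ?, ?)",
    [("North", 500.0, 300.0), ("North", 200.0, 150.0), ("South", 400.0, 100.0)],
)


# Stage 2 (business model layer): business concepts defined once as measures.
@dataclass(frozen=True)
class Measure:
    expression: str  # SQL aggregate over fact_sales
    description: str  # business meaning shown to report users


SEMANTIC_MODEL = {
    "Revenue": Measure("SUM(revenue)", "Total sales value"),
    "Profit": Measure("SUM(revenue) - SUM(cost)", "Revenue minus cost"),
}


def query(measure_names: list[str], group_by: str) -> list[tuple]:
    """Build SQL from the model. Names must come from trusted model
    definitions, never from raw user input, because they are interpolated."""
    cols = ", ".join(f'{SEMANTIC_MODEL[m].expression} AS "{m}"' for m in measure_names)
    sql = f"SELECT {group_by}, {cols} FROM fact_sales GROUP BY {group_by} ORDER BY {group_by}"
    return conn.execute(sql).fetchall()


# Stage 3 (presentation): a plain-text report a user can read.
def render_report(rows: list[tuple], headers: list[str]) -> str:
    lines = [" | ".join(headers)]
    lines += [" | ".join(str(value) for value in row) for row in rows]
    return "\n".join(lines)


rows = query(["Revenue", "Profit"], group_by="region")
print(render_report(rows, ["Region", "Revenue", "Profit"]))
```

Report authors ask for "Profit" by region without needing to know how profit is calculated; that definition lives in one place, the business model layer.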

To provide effective BI (Business Intelligence) solutions, our affiliate Intelex Insight has developed standards for designing data architecture.

These standards set out principles, rules, and models for data collection; describe how collected data is managed and preserved; ensure data confidentiality and security; give an overview of reporting; and lay the groundwork for user-side data analysis. Next, we'll explore what happens behind the scenes in data preparation.